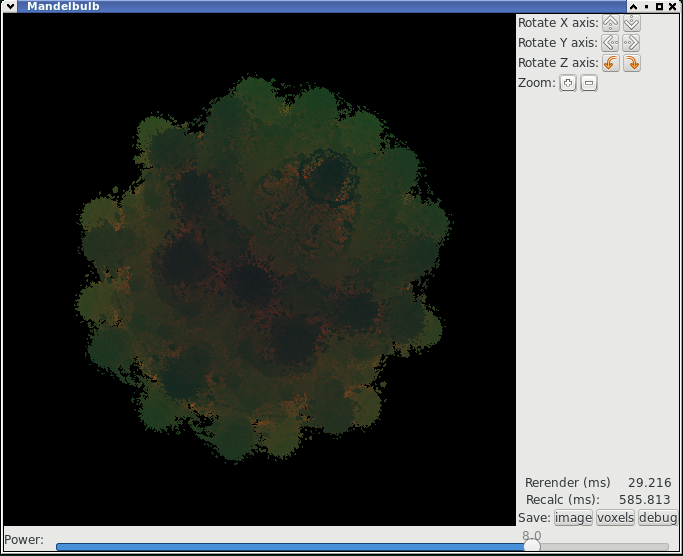

For a while I'd thought about learning OpenCL, and a couple of accidental ;-) purchases of new hardware meant I now had GPUs that were useful to play with. While I was at it I decided to learn Gtk-rs, which is a Rust binding for the Gtk+ GUI library. Reusing an earlier project to plot a mandelbulb gave me the embarrassingly parallel problem that a GPU could help with.

The OpenCL system isn't too complex; you run kernels that the system executes in parallel across arrays of data, and you can wait for them to finish. The kernels are compiled at program runtime for the GPU that your system has (typically using something like LLVM in the case of Mesa based GPUs on Linux). The source for the mandelbulb kernel started off as my earlier C code with only a few changes to turn it into an OpenCL kernel; the function declaration is slightly special. The function gets called for each point, and the OpenCL system provides the get_global_id and get_global_size calls for the kernel to figure out where it is in the array; I use these to produce a coordinate in the range -1.0 to 1.0 for each dimension.
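The index-to-coordinate mapping described above can be sketched in plain Rust. This is only an illustration: the function names are hypothetical, and it assumes the first work item lands exactly on -1.0 and the last on 1.0, which may not match the real kernel's mapping.

```rust
/// Map a work-item index to a coordinate in the range -1.0 to 1.0.
/// `id` plays the role of get_global_id(dim) and `size` the role of
/// get_global_size(dim) inside an OpenCL kernel.
/// Assumption: index 0 maps to -1.0 and index size-1 maps to +1.0.
fn index_to_coord(id: usize, size: usize) -> f32 {
    if size <= 1 {
        // A single point has no range to spread over; put it in the centre.
        return 0.0;
    }
    -1.0 + 2.0 * (id as f32) / ((size - 1) as f32)
}

/// Position of one voxel, given its 3D index and the 3D grid size.
fn voxel_position(id: [usize; 3], size: [usize; 3]) -> [f32; 3] {
    [
        index_to_coord(id[0], size[0]),
        index_to_coord(id[1], size[1]),
        index_to_coord(id[2], size[2]),
    ]
}
```

In the kernel itself the same arithmetic would be done once per dimension using the values OpenCL hands to each work item.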

I started off calling OpenCL through the OpenCL C++ wrappers, but they were horrid (probably worse than the pure C), and I soon moved to Rust and the ocl crate. Outside of the kernels the Rust code allocates the voxel and image buffers, calls the kernels, waits for the results and grabs the output. I'm not using any of the cleverer features; I've got a simple voxel buffer and am implicitly waiting for the result rather than being clever about the queuing. I should probably look at using OpenCL 'image' buffers, but I was told that Mesa might not like them much.

The main kernel gives me a voxel array, so now, hmm, how to plot those? I'd been thinking about OpenGL, but to do that I'd have to generate a mesh from the voxels; the simpler way was to do a simple ray trace using a separate kernel. The messiest part of this is passing data (e.g. eye position, orientation, etc.), which ends up as a separate array with fairly arbitrary indices. I did some simple lighting based off the angle between the eye and a light source. I did wonder about something cleverer, but then I started thinking: does a 3D fractal actually have a surface normal?
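To make the ray trace and the parameter array a little more concrete, here is a CPU-side sketch in Rust. It is not the real kernel: the slot names, the array layout, the fixed-step march, the 8.0 distance cut-off and the cosine-based brightness are all assumptions chosen for illustration.

```rust
/// Hypothetical slots in the flat parameter array handed to the ray tracer.
const EYE: usize = 0; // slots 0..3: eye position
const DIR: usize = 3; // slots 3..6: ray direction (assumed unit length)
const STEP: usize = 6; // slot 6: distance advanced per march step
const PARAM_LEN: usize = 7;

/// True if the voxel containing point `p` is set. The grid is `n` voxels
/// per axis and covers the cube from -1.0 to 1.0 in each dimension.
fn voxel_at(voxels: &[u8], n: usize, p: [f32; 3]) -> bool {
    let mut idx = [0usize; 3];
    for a in 0..3 {
        if p[a] < -1.0 || p[a] >= 1.0 {
            return false; // outside the cube
        }
        // Scale -1.0..1.0 to 0..n, guarding against rounding up to n.
        idx[a] = (((p[a] + 1.0) * 0.5 * n as f32) as usize).min(n - 1);
    }
    voxels[(idx[2] * n + idx[1]) * n + idx[0]] != 0
}

/// Step along the ray and return the distance to the first set voxel.
fn march(voxels: &[u8], n: usize, params: &[f32; PARAM_LEN]) -> Option<f32> {
    let mut t = 0.0f32;
    // Assumption: 8.0 units is far enough to cross the cube from the eye.
    while t < 8.0 {
        let p = [
            params[EYE] + t * params[DIR],
            params[EYE + 1] + t * params[DIR + 1],
            params[EYE + 2] + t * params[DIR + 2],
        ];
        if voxel_at(voxels, n, p) {
            return Some(t);
        }
        t += params[STEP];
    }
    None
}

/// Brightness from the angle at the hit point between the directions to the
/// eye and to the light: the cosine of that angle, clamped below at 0.0.
fn brightness(hit: [f32; 3], eye: [f32; 3], light: [f32; 3]) -> f32 {
    let e = [eye[0] - hit[0], eye[1] - hit[1], eye[2] - hit[2]];
    let l = [light[0] - hit[0], light[1] - hit[1], light[2] - hit[2]];
    let dot = e[0] * l[0] + e[1] * l[1] + e[2] * l[2];
    let len = |v: [f32; 3]| (v[0] * v[0] + v[1] * v[1] + v[2] * v[2]).sqrt();
    let denom = len(e) * len(l);
    if denom == 0.0 {
        return 0.0; // degenerate: eye or light sits on the hit point
    }
    (dot / denom).max(0.0)
}
```

Giving the slots names at least keeps the arbitrary indices in one place; the kernel side would need the same numbers kept in step by hand.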

Gtk-rs isn't that hard, but there are a couple of gotchas that took me a while to understand. Firstly, the image that's displayed is a Gtk Image, and you can back this with a Cairo ImageSurface. That works nicely, but once it's bound to the widget to be displayed you can't keep hold of it as a mutable reference to update it; this is Rust being safe, even in the case where it's just image data. The solution is to unbind the Cairo ImageSurface from the display widget with set_from_surface(None), get a reference to the ImageSurface that you can change with get_data(), update it, and then pass the ImageSurface back to the Image with set_from_surface again.

The other bit I found hard was getting events to work in Gtk-rs; again Rust's lifetime system gets in the way. The lifetime of event handlers is badly defined, so you end up passing really messy reference-counted (Rc<RefCell<..>>) objects into the event handlers, and structuring the data so that you only have one or two objects to pass in makes this less painful.
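The Rc<RefCell<..>> pattern can be shown without Gtk at all. In the sketch below FakeWidget is a made-up stand-in for a widget that stores 'static callbacks, and AppState and wire_up are hypothetical names; the real Gtk-rs signal connection calls look different, but the ownership problem is the same.

```rust
use std::cell::RefCell;
use std::rc::Rc;

/// Hypothetical application state, kept in one struct so that only a single
/// shared object has to be handed to each handler.
struct AppState {
    power: f32,
    rotation_deg: f32,
    needs_voxel_recalc: bool,
}

/// Stand-in for a toolkit widget: it owns its callbacks, so they must be
/// 'static and cannot borrow from the caller's stack.
struct FakeWidget {
    handlers: Vec<Box<dyn Fn(f32)>>,
}

impl FakeWidget {
    fn new() -> Self {
        FakeWidget { handlers: Vec::new() }
    }

    fn connect(&mut self, f: impl Fn(f32) + 'static) {
        self.handlers.push(Box::new(f));
    }

    fn emit(&self, value: f32) {
        for h in &self.handlers {
            h(value);
        }
    }
}

/// Each closure gets its own Rc clone and borrows the state mutably only
/// for the duration of the event.
fn wire_up(state: &Rc<RefCell<AppState>>) -> (FakeWidget, FakeWidget) {
    let mut rotate = FakeWidget::new();
    let mut power = FakeWidget::new();

    let s = Rc::clone(state);
    rotate.connect(move |delta| {
        s.borrow_mut().rotation_deg += delta;
    });

    let s = Rc::clone(state);
    power.connect(move |p| {
        let mut st = s.borrow_mut();
        st.power = p;
        st.needs_voxel_recalc = true;
    });

    (rotate, power)
}
```

Every handler holds a strong reference, so the state lives as long as any widget that can still fire an event; the price is that borrow errors move from compile time to run time.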

Searching for docs is a bit tricky as well, because the base Gtk docs don't give hints of where to look, and the Gtk-rs docs are mostly just about the binding without giving too many clues to these types of problem.

I used Nalgebra to do transforms on eye/light/view port locations. It's got all the matrix and vector maths you could want, and it can do the simpler transforms I wanted as well.

Rotations and zooms just change the parameters to the ray tracer, rotating the eye etc. and changing the range, but they don't recalculate the voxels, which makes them pretty fast. Changing the power parameter does a full recalculation.
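As a rough illustration of why rotations and zooms are cheap, the sketch below only touches a small set of view parameters. It uses plain arrays rather than Nalgebra to stay self-contained, and the View struct, its fields and the choice of the Y axis are assumptions, not the real program's layout.

```rust
/// Rotate a point about the Y axis by `angle` radians.
fn rotate_y(p: [f32; 3], angle: f32) -> [f32; 3] {
    let (s, c) = angle.sin_cos();
    [c * p[0] + s * p[2], p[1], -s * p[0] + c * p[2]]
}

/// Hypothetical view parameters fed to the ray tracer.
#[derive(Clone, Copy, Debug, PartialEq)]
struct View {
    eye: [f32; 3],
    light: [f32; 3],
    range: f32, // half-width of the region being viewed
}

/// Rotating moves the eye and the light together; the voxels are untouched.
fn rotated(view: View, angle: f32) -> View {
    View {
        eye: rotate_y(view.eye, angle),
        light: rotate_y(view.light, angle),
        ..view
    }
}

/// Zooming in by `factor` shrinks the viewed range; again no voxel work.
fn zoomed(view: View, factor: f32) -> View {
    View {
        range: view.range / factor,
        ..view
    }
}
```

Only the ray-trace pass has to run again after either call, using the voxel buffer that is already there.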

It seems to be working fine on my RX550 Radeon, and someone with an Nvidia card said it was happy. The Radeon 7550 in my laptop doesn't seem as happy and tends to get a few corrupt blocks at the end of the image; it's suspected this might be a problem waiting for the GPU to finish. I'd had that with the C++ code as well, so I suspect this is a Mesa/kernel bug.

The animation at the top was generated by manually saving the image after each step.

You can find the code here and it's also (with a possibility of being in flux) on my GitHub.

(c)David Alan Gilbert 2018

mail: fromwebpage@treblig.org irc: penguin42 on freenode | matrix: penguin42 on matrix.org | mastodon: penguin42 on mastodon.org.uk